
Help me understand AI

Posted on 10/15/23 at 11:45 am

Does AI collect images from around the web and piece it together into a new image or is every element generated? For example, would you ever see yourself in an image generated by AI? Is every person, building fake unless you specify like, say the White House?

Posted on 10/15/23 at 11:46 am to Bamafig

quote:

Does AI collect images from around the web and piece it together into a new image or is every element generated?

No, they are fed with information in order to create a pattern of behavior.

quote:

For example, would you ever see yourself in an image generated by AI?

No

quote:

Is every person, building fake unless you specify like, say the White House?

Yes

Posted on 10/15/23 at 11:48 am to Bamafig

I believe it is more like me asking you to draw a pic of the white house or of "the president"

You would have to use your existing knowledge of what those two things look like to make the image as you remember it.

Only AI has a better more detailed memory and is better at drawing.

This post was edited on 10/15/23 at 11:49 am

Posted on 10/15/23 at 12:00 pm to fightin tigers

quote:

Only AI has a better more detailed memory and is better at drawing.

Everything but hands. And eyes.

Posted on 10/15/23 at 12:01 pm to Bamafig

There are two main pieces to AI

1. The program/code.

2. The library/database

Your result will be influenced by how good the first is and how large the second is.

Which brings up the future of AI. Whoever programs the code and/or selects the data can steer the outcome.

This post was edited on 10/15/23 at 12:04 pm

Posted on 10/15/23 at 12:03 pm to Bamafig

For the most part, it's a very clever trick. Computers have always been able to spot patterns and predict what hypothetical things would look like based on that. They just have a lot more data to work with now, and more power to do the work.

AI doesn't really "know" anything. That's how you end up with a shockingly good rendition of, IDK, "Neil Armstrong planting an LSU flag on Mars" but with a "Z" instead of an "S."

I will say, the youngsters who don't take the "AI can write code" hype seriously and get CS degrees in spite of it will make a lot of money... just as those of us who disregarded the noise of 30 years ago about Indian programmers have.

Posted on 10/15/23 at 12:04 pm to JperiodCperiod

I wonder if the hand thing is intentional at this point. I remember that GPS originally had a buffer zone added for civilian use. Idk

Posted on 10/15/23 at 12:04 pm to Bamafig

Who could have predicted that Americans would be captivated by the cute little pictures that AI creates to the point that they wholesale ignore the threat that AI (even in its current form) actually is? It is the perfect representation of where we are as a society these days.

"Look! Shiny new toy!"

This post was edited on 10/15/23 at 12:15 pm

Posted on 10/15/23 at 12:07 pm to Bamafig

I foresee (and it may already be the case) glasses with built-in filters so that you can be with a 4 who is filtered to look like a 10. A real-life Shallow Hal scenario.

This post was edited on 10/15/23 at 12:11 pm

Posted on 10/15/23 at 12:14 pm to JperiodCperiod

quote:

There are two main pieces to AI

1. The program/code.

2. The library/database

Your result will be influenced by how good the first is and how large the second is.

Which brings up the future of AI. Whoever programs the code and/or selects the data can steer the outcome.

Posted on 10/15/23 at 12:30 pm to Bamafig

quote:

Does AI collect images from around the web and piece it together into a new image or is every element generated? For example, would you ever see yourself in an image generated by AI? Is every person, building fake unless you specify like, say the White House?

That's not quite how these things work. They train on tons of data, much of it from the Internet, but they don't store it as individual documents or photos. They store the patterns they find, like which words typically come before and after other words, or which people, places and things show up in different types of photos.
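To make the "stores patterns, not documents" idea concrete, here is a toy sketch. It is not how a real model works (those use neural networks, not count tables), and the function names and sample sentences are made up for illustration. It only shows that you can throw away the original text and still keep what tends to follow what.

```python
from collections import Counter, defaultdict

def learn_patterns(sentences):
    """Count which word follows which; the sentences themselves are not kept."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following

def most_likely_next(following, word):
    """Return the word seen most often after `word`, or None if never seen."""
    counts = following.get(word)
    return counts.most_common(1)[0][0] if counts else None

# Made-up training text, purely for illustration.
patterns = learn_patterns([
    "the tigers won the game",
    "the tigers lost the game",
    "the saints won the game",
])

print(most_likely_next(patterns, "the"))
print(most_likely_next(patterns, "won"))
```

After training, `patterns` holds only word-pair counts. None of the three sentences is stored anywhere, which is roughly the point being made above.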

quote:

For example, would you ever see yourself in an image generated by AI?

Are you famous enough to figure prominently in its training data? It does well with pictures of celebrities. But again, these models aren't storing images in a typical database and just pulling faces out of a hat. They "draw" the image from a starting point of noise, so if one ends up drawing you, that's either coincidence or you figured prominently in its training data, the way celebrities appear in far more photos than nobodies.

This article has a pretty good layman's terms explanation of the process for images. LINK

Posted on 10/15/23 at 12:31 pm to Bamafig

We’re not in true AI. It’s essentially just cobbling together the entire internet as a database, minus whatever the AI company wants to eliminate.

Posted on 10/15/23 at 1:23 pm to Bamafig

quote:

Does AI collect images from around the web and piece it together into a new image or is every element generated?

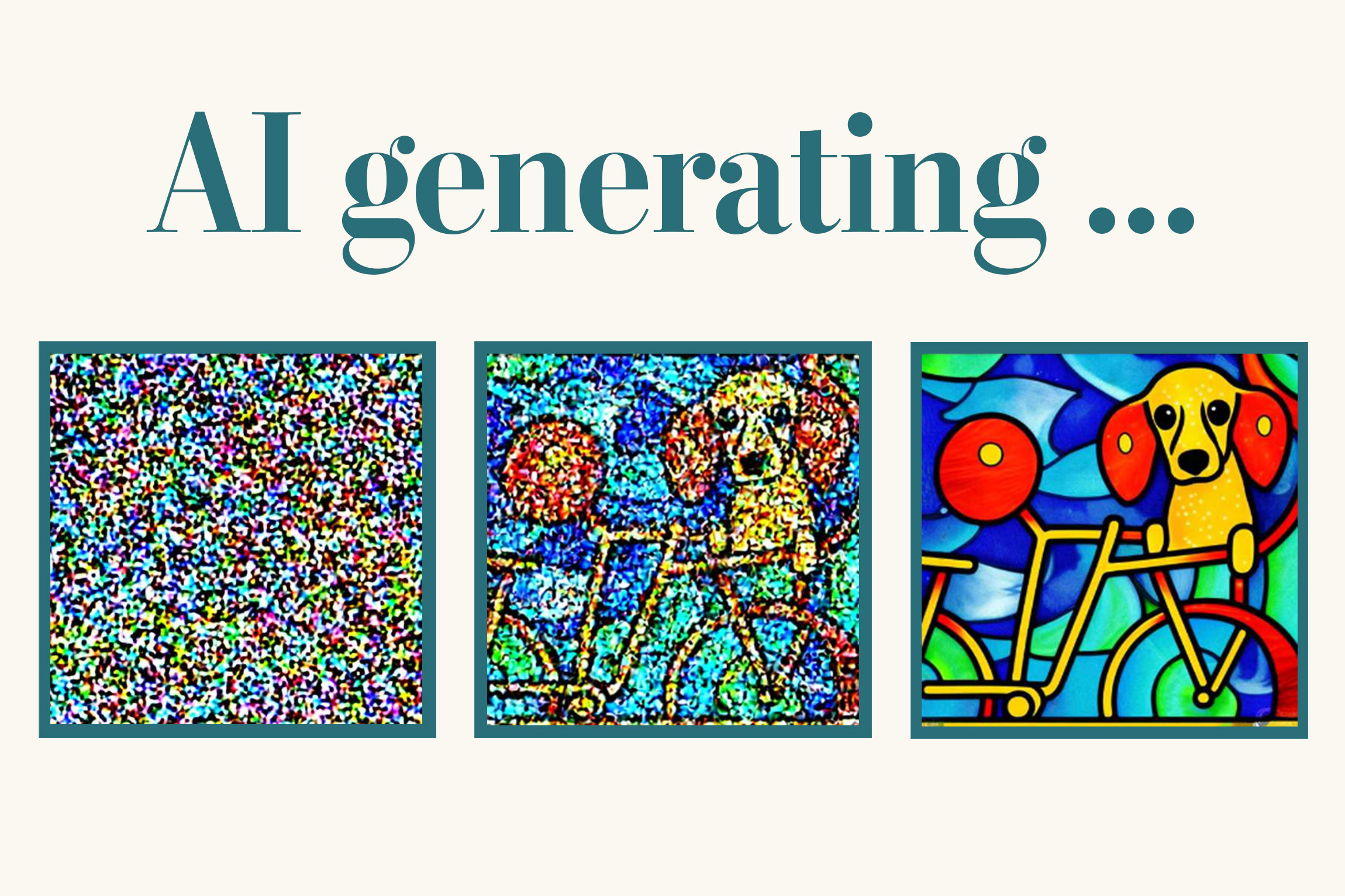

So the first thing to know is that there are different types of AI image generators. Right now, diffusion models are the most successful. But that may change one day. I bring this up because some of what I’m about to say may not apply to other types of models.

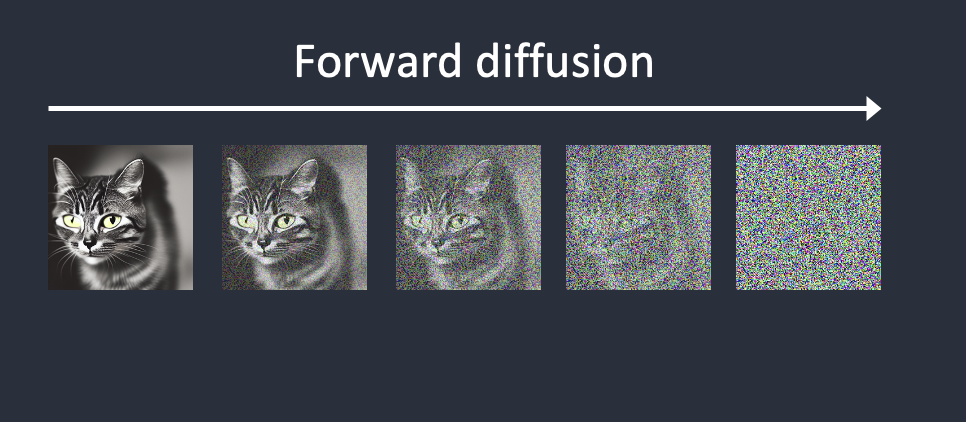

Diffusion models are neural networks (read: AIs) that learn to create images from noise. Or more accurately - they learn to differentiate noise from an image. They are trained by taking a complete image and slowly adding random noise to it, like this:

During training, the model’s goal is to look at the image and estimate the noise that was added. After many rounds of training you’re left with a model that’s capable of looking at a noisy image and determining which parts are noise.
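A rough sketch of that training setup, using a 4-pixel list as the "image." The square-root blending formula is my assumption, borrowed from the usual way diffusion papers mix image and noise, and `add_noise` and `training_loss` are made-up names, not any library's API.

```python
import math
import random

def add_noise(image, noise_level, rng):
    """Blend an image with Gaussian noise.

    noise_level = 0.0 returns the image unchanged; 1.0 returns pure noise.
    Returns the noisy image and the exact noise that was mixed in.
    """
    noise = [rng.gauss(0.0, 1.0) for _ in image]
    keep = math.sqrt(1.0 - noise_level)   # how much of the image survives
    mix = math.sqrt(noise_level)          # how much noise is blended in
    noisy = [keep * p + mix * n for p, n in zip(image, noise)]
    return noisy, noise

def training_loss(predicted_noise, true_noise):
    """Mean squared error between the model's noise guess and the real noise."""
    total = sum((p - t) ** 2 for p, t in zip(predicted_noise, true_noise))
    return total / len(true_noise)

rng = random.Random(0)
image = [0.0, 0.5, 1.0, 0.5]              # a tiny 4-pixel "image"
noisy, noise = add_noise(image, 0.3, rng)

# A perfect model would guess the added noise exactly, so its loss is 0.
perfect_loss = training_loss(noise, noise)
# A model that always guesses "no noise at all" scores worse.
lazy_loss = training_loss([0.0] * len(noise), noise)
print(perfect_loss, lazy_loss)
```

Training is just repeating this with millions of images and nudging the network so its guess gets closer to the real noise each time.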

Then during actual image generation (referred to as inference) the model does the exact opposite. It starts with an image that consists of pure random noise. The model determines the “predicted” noise from its training and subtracts that from the actual image. It looks something like this:

One key thing to note is that the model denoises the image in multiple steps. So it doesn’t subtract all of the noise at once. At each step the model looks at the image, estimates the noise, then subtracts a percentage of that estimated noise. This allows the model to home in on an image rather than trying to create something from nothing in one shot. The amount of noise subtracted at each step is determined by the noise schedule, which can vary depending on the particular model being used. Some more customizable models, like Stable Diffusion, allow the user to pick from various noise schedules.
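Here is a toy version of that multi-step loop. Big caveat: `estimate_noise` is a stand-in I made up. It "knows" one fixed target pattern and calls everything else noise, whereas a real trained network learned that from data. The schedule values are also hypothetical, and real samplers use more involved update rules than a plain subtraction.

```python
import random

# Stand-in for a trained network: it "knows" a single target pattern and
# reports everything else in the image as noise. This is an assumption for
# illustration only; a real model has no hard-coded target.
TARGET = [0.0, 0.5, 1.0, 0.5]

def estimate_noise(image):
    return [pixel - wanted for pixel, wanted in zip(image, TARGET)]

def denoise(image, schedule):
    """At each step, subtract a fraction of the estimated noise."""
    for fraction in schedule:
        predicted = estimate_noise(image)
        image = [pixel - fraction * n for pixel, n in zip(image, predicted)]
    return image

def error(image):
    """Largest per-pixel distance from the target pattern."""
    return max(abs(pixel - wanted) for pixel, wanted in zip(image, TARGET))

rng = random.Random(0)
start = [rng.gauss(0.0, 1.0) for _ in TARGET]   # begin from pure random noise

schedule = [0.1, 0.2, 0.3, 0.5, 1.0]            # hypothetical noise schedule
result = denoise(start, schedule)
print(error(start), error(result))
```

Each pass shrinks the leftover noise by the fraction in the schedule, so the image drifts toward something clean over several steps instead of in one jump.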

The image dataset used for training is obviously very important. If you only train the model on images of cats, it will be very good at creating cats but that will be the only thing it produces from noise.

The captioning of the training images is also incredibly important. At each step of training the model is given an image with some amount of random noise added along with a caption describing the original image.

So you might have a noisy image of a cat with the caption “painting of a cat by Vincent Van Gogh” (I’m sticking with cats for consistency).

With these three images, the model learns four concepts: dogs, cats, the style of Vincent Van Gogh, and the style of Claude Monet. Now extrapolate that over hundreds of millions of images, and you can begin to see how these models learn so many concepts. The LAION-5B dataset, subsets of which were used to train Stable Diffusion, consists of more than 5 billion image and caption pairs.

quote:

Does AI collect images from around the web and piece it together into a new image or is every element generated?

I think I answered this above but it’s probably most accurate to say that the AI creates images based on knowledge it gained from images on the web.

quote:

For example, would you ever see yourself in an image generated by AI?

Only if there were enough images of you in the training dataset to actually influence image generation. Even then, you would have to prompt for it. If you Google image search your name, do you see a page full of your pictures? If not, then probably not.

quote:

Is every person, building fake unless you specify like, say the White House?

Good question. If you prompt for “a woman,” you will get the AI’s generic vision for what a woman looks like based on its training data. So it will be some sort of average across all images that were captioned as “woman.” In this way you can actually get an idea of what the overall training data looks like. Prompting “woman” on a model trained from magazine clippings will look very different than the same prompt on a model trained from Facebook images, both in overall style and the appearance of the women themselves.

If you prompt “Jennifer Connelly” you will probably get an image that looks like Jennifer Connelly because her pictures are all over the web. If you prompt “Jennifer” you will get some sort of average approximation of all of the Jennifers in the training data, which will be skewed toward the Jennifers that show up most often in that data.

This post was edited on 10/15/23 at 1:52 pm

Posted on 10/15/23 at 1:29 pm to Bamafig

quote:

Help me understand AI

Skynet is a generation away

Posted on 10/15/23 at 1:32 pm to BigPerm30

quote:

We’re not in true AI.

What do you mean?

Posted on 10/15/23 at 1:36 pm to Bamafig

It's artificial, in the truest sense of the word. It's only as good as what information is plugged in to begin with.

Posted on 10/15/23 at 1:39 pm to blue_morrison

That applies to us too, though. We learn, notice patterns, and react to stimuli, based entirely on our past experiences.

AI will never be worse than it is now.

Posted on 10/15/23 at 2:19 pm to Bamafig

Data scientists are now asking AI to improve the AI code. Up until a year ago, technology progressed in an arc. With AI enhancing its own programming code, it’s going to get exponentially better and more efficient. It will create faster and more efficient chips as well. It’s fascinating and scary at the same time.

An AI alarmist said two things in an article I read last week: it should never have been connected to the internet, and it should never have been given access to its own code.
